The core design principle is dual-perspective scenario simulation: players inhabit both the caregiver and the individual in need within the same narrative scenario. This forced perspective shift is the primary mechanism for building empathy. It works not through instruction, but through direct experiential modelling of a cognitive state that cannot otherwise be observed from outside.

Key simulation mechanics were designed to model dementia's cognitive fragmentation:

Memory fragmentation as a game mechanic — what appear to be errors or glitches are intentional representations of the way memory loss disrupts environmental coherence. Familiar objects and spaces behave unpredictably, not as a technical failure but as a designed simulation of cognitive disorientation.

Random disruptions — sudden scene changes, unexpected sounds, and fragmented dialogue simulate the involuntary, non-linear nature of memory loss. These are scripted stochastic events rather than random noise, calibrated to create disorientation without overwhelming the player.
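
The distinction between scripted stochastic events and random noise can be illustrated with a small sketch. It is not the project's actual implementation. It assumes a seeded generator (so a session can be replayed), a minimum gap between events (one possible way to calibrate intensity), and event names taken from the disruptions listed above. The function name, parameters, and default timings are hypothetical.

```python
import random
from dataclasses import dataclass

# Hypothetical event kinds, named after the disruptions described in the text.
EVENT_KINDS = ("scene_change", "unexpected_sound", "fragmented_dialogue")


@dataclass(frozen=True)
class Disruption:
    time_s: float  # seconds from the start of the scenario
    kind: str


def schedule_disruptions(duration_s, seed, mean_gap_s=40.0, min_gap_s=15.0):
    """Build a reproducible disruption schedule for one play session.

    Assumed calibration: gaps are drawn from an exponential distribution
    (irregular, hard to anticipate) but never fall below min_gap_s, so
    events cannot pile up and overwhelm the player.
    """
    rng = random.Random(seed)  # same seed -> same schedule
    events = []
    t = 0.0
    while True:
        gap = max(min_gap_s, rng.expovariate(1.0 / mean_gap_s))
        t += gap
        if t >= duration_s:
            break
        events.append(Disruption(t, rng.choice(EVENT_KINDS)))
    return events


if __name__ == "__main__":
    for event in schedule_disruptions(duration_s=300.0, seed=7):
        print(f"{event.time_s:6.1f}s  {event.kind}")
```

Because the schedule depends only on the seed and the calibration parameters, the same sequence can be replayed when testing, while a player still experiences the timing as unpredictable.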

First-person RPG perspective — players inhabit each character's viewpoint directly, rather than observing from outside. This removes the emotional distance that third-person framing creates.

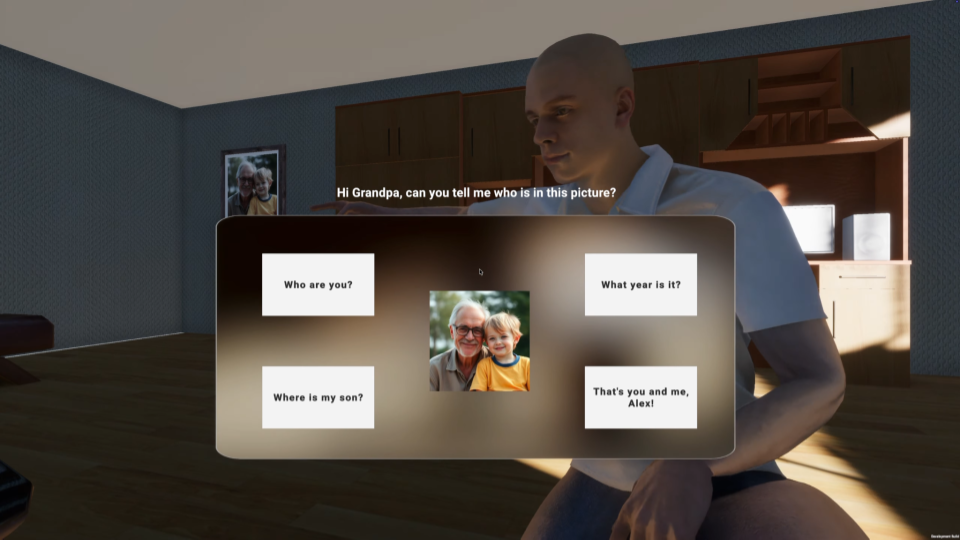

Player agency at the closing moment — the simulation ends with a meaningful binary choice: accept the responsibility of care, or walk away. This design decision ensures the experience demands an active response rather than passive observation, and generates data on player decision patterns for evaluation purposes.
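
As a rough illustration of how the closing choice could feed evaluation, the sketch below tallies recorded choices into proportions. The enum values, function name, and data shape are assumptions made for this example, not the project's actual logging format.

```python
from collections import Counter
from enum import Enum


class FinalChoice(Enum):
    # Hypothetical labels for the two options described in the text.
    ACCEPT_CARE = "accept_care"
    WALK_AWAY = "walk_away"


def summarize_choices(choices):
    """Return the share of sessions that ended with each final choice."""
    counts = Counter(choices)
    total = sum(counts.values())
    return {
        choice.value: (counts[choice] / total if total else 0.0)
        for choice in FinalChoice
    }


if __name__ == "__main__":
    recorded = [
        FinalChoice.ACCEPT_CARE,
        FinalChoice.ACCEPT_CARE,
        FinalChoice.WALK_AWAY,
        FinalChoice.ACCEPT_CARE,
    ]
    print(summarize_choices(recorded))
```

Keeping the choice as a closed two-value type mirrors the binary design: every completed session maps to exactly one outcome, which keeps the aggregate pattern simple to interpret.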